RIT is buzzing with a fresh batch of AI and VR ideas, and the student crew behind AIRLab is leading the charge. The scene feels like a lively workshop where ideas bounce around the room and every new prototype seems to promise something big. AIRLab teams are weaving LLM-enhanced AR/VR into job training for neurodivergent individuals, aiming to make learning feel natural rather than overwhelming. The vibe is practical and hopeful: a group of makers working hard to help people through everyday tasks.

Immersive experiences take center stage as researchers build LLM-powered virtual avatars for soft-skill and communication training tailored to autistic individuals. These avatars are more than a scripted chatbot: they adjust in real time, offering hints and context as a conversation unfolds. It's not science fiction; it's context-aware guidance designed for customer service roles, where tone and patience matter. The team, led by Director Roshan Peiris, keeps the pace steady, but there's a spark of curiosity in every meeting.

The list of collaborators reads like a map: RIT, the University of Maine, the University of Moratuwa, and Heritage Christian Services are all chipping in to push this frontier forward. The core themes of Immersive Accessibility shape the projects: making immersive tech accessible, using immersive tech to improve accessibility, and extending human capabilities through human-computer interaction (HCI). It's all about empowering experiences, and you can sense that mission in the air.

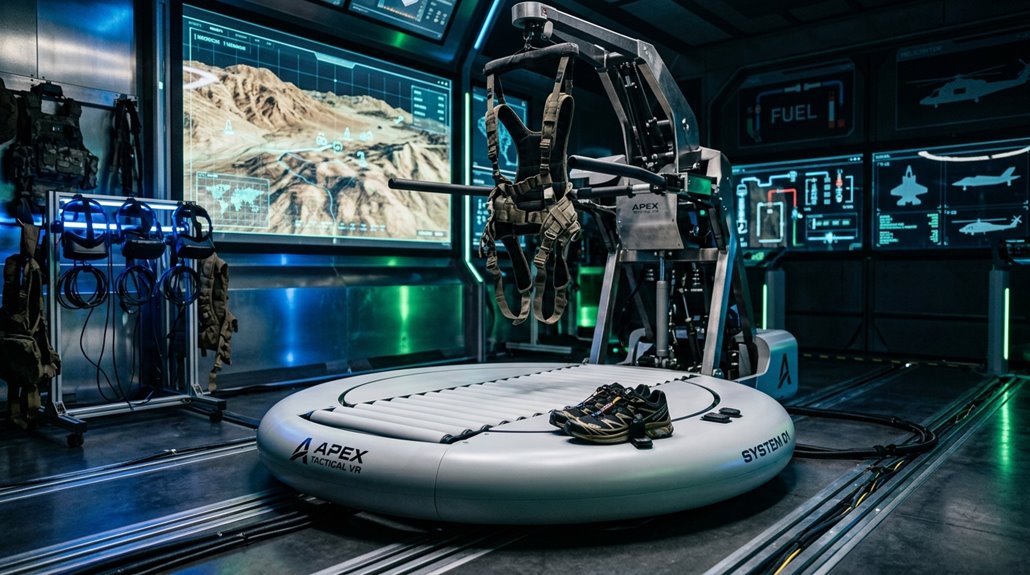

Job training projects blend virtual reality with real-world needs. Autistic trainees practice both soft and hard job skills in spatially aware VR-LLM environments, and public speaking practice is being tested with VR-LLM agents. A VR chatbot adds a friendly face to communication skills training, and real-world deployment is on the horizon thanks to partnerships with New York State job training organizations.

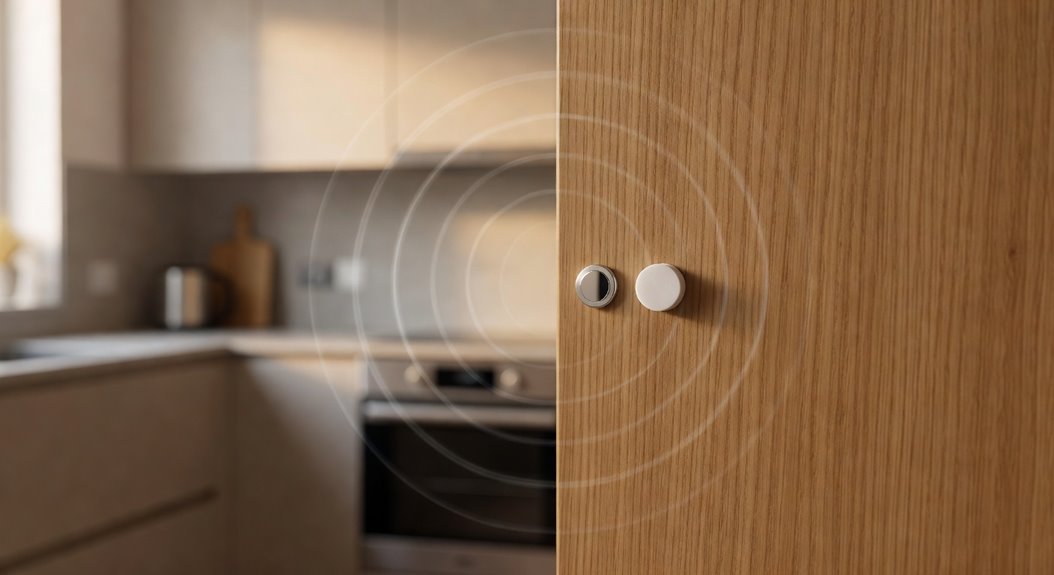

Even the studio’s past sits nearby, with XR simulations of 19th-century presses and a Steam release earlier this year. XR technologies have long had a home at RIT, where they have been used to stage virtual theater and to study accessibility for people with vision impairments. It’s a lively mix of learning, testing, and imagining what comes next, all in a space where AI innovations feel almost friendly. Some of these immersive environments are even being explored alongside voice-controlled platforms. With Alexa-compatible smart home devices now numbering over 100,000, that pairing hints at how widely AI assistants have spread and how they might fit into training and accessibility ecosystems.
