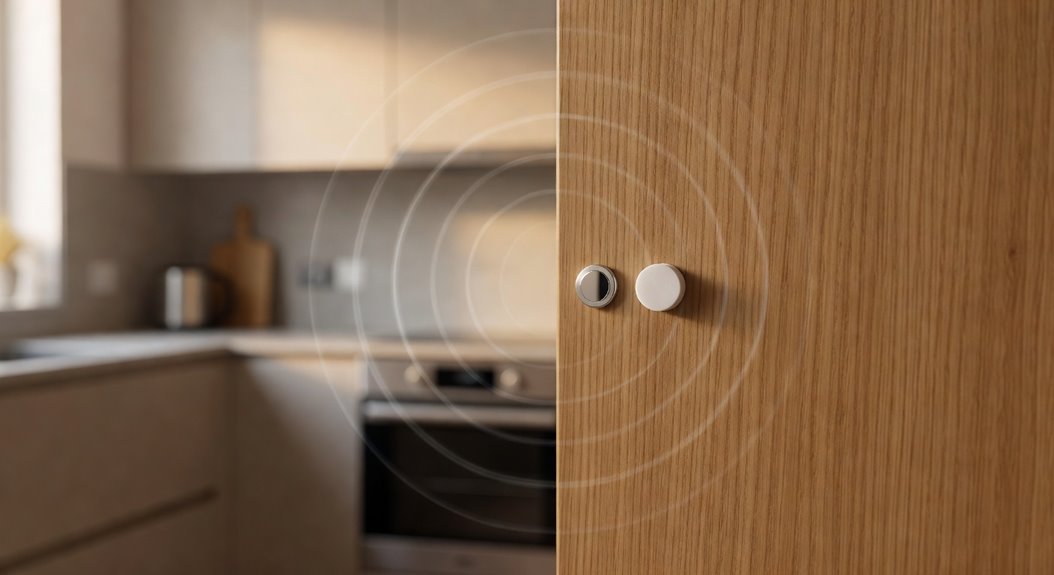

Ditching cloud‑based voice assistants feels a bit like finally breaking up with a clingy roommate who never stops talking. The new partner is a tiny local AI that lives on the device, and it actually respects personal space. Instead of sending every “Hey, what’s the weather?” to a distant server, the request stays in the house, processed by a chip that never leaves the room. That shift makes data security feel like a solid barrier on the door, not a flimsy screen door that anyone can push open.

Voice privacy improves because the microphone listens only for the wake word, and the command that follows is processed on the device, so conversations are no longer streamed into a cloud that could be breached. With local processing, user control becomes a real thing: a simple toggle can mute the mic, delete the cache, or even shut down the whole assistant, and the user can check the result directly instead of trusting a setting on someone else’s server. Biometric risks shrink too, because the voiceprint never leaves the device, so scammers have far fewer chances to snatch a digital fingerprint and use it for impersonation. Digital trust builds up, as the user finally feels safe saying “I’m hungry” without fearing that a data broker will turn it into a targeted ad.

Local voice assistants give users real control, offer mute‑on‑demand, and keep voiceprints on‑device, sharply cutting biometric and privacy risks.
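For readers who like to see the idea in code, here is a minimal sketch of the controls described above: a wake‑word gate plus mute, cache deletion, and shutdown. It is a toy model only, with no audio or network access. The class name `LocalAssistant`, its methods, and the wake word `"hey assistant"` are all hypothetical, not the API of any real product.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class LocalAssistant:
    """Toy on-device assistant (hypothetical names; no audio or network I/O)."""

    wake_word: str = "hey assistant"  # assumed wake word, for illustration only
    muted: bool = False
    running: bool = True
    cache: List[str] = field(default_factory=list)  # commands kept on-device

    def hear(self, utterance: str) -> Optional[str]:
        """Return the command to handle locally, or None if nothing is processed."""
        if not self.running or self.muted:
            return None  # mic is off: nothing is processed or stored
        text = utterance.lower().strip()
        if not text.startswith(self.wake_word):
            return None  # no wake word: the utterance is discarded, never cached
        command = text[len(self.wake_word):].strip(" ,")
        if not command:
            return None
        self.cache.append(command)  # stays in local memory only
        return command

    def mute(self) -> None:
        self.muted = True

    def unmute(self) -> None:
        self.muted = False

    def delete_cache(self) -> None:
        self.cache.clear()

    def shut_down(self) -> None:
        """Stop listening and wipe everything that was stored."""
        self.running = False
        self.delete_cache()


if __name__ == "__main__":
    assistant = LocalAssistant()
    print(assistant.hear("I'm hungry"))                          # None (no wake word)
    print(assistant.hear("Hey assistant, what's the weather?"))  # what's the weather?
    assistant.mute()
    print(assistant.hear("hey assistant, lights on"))            # None (muted)
```

The point of the sketch is that every control is a local state change the user can inspect: after `delete_cache()` the list is simply empty, and after `shut_down()` no utterance is processed at all.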

The old cloud model was a perfect playground for hackers. Large amounts of personal data were constantly streamed to remote servers, and any breach could expose private conversations, financial details, and even health information. Voice data, being a biometric identifier, could be repurposed for identity theft, allowing cybercriminals to impersonate a user in a CEO fraud scheme. Microsoft’s VALL‑E showed how a three‑second clip could be turned into a convincing clone, meaning a thief could steal a voice with barely a whisper of sound, then use it to access accounts or approve transactions. The risk of voice spoofing turned everyday devices into open doors for fraud.

Always‑on microphones added another layer of annoyance. Devices would mishear a word, record a snippet, and send it to the cloud, sometimes passing a private moment along to someone else’s phone. In one widely reported 2018 incident, an Amazon Echo recorded a couple’s private conversation and sent it to one of their contacts, proving that “always listening” can be always embarrassing. The accidental recordings created a constant feeling of being watched, eroding confidence in the technology.

Beyond privacy, the cloud turned voice assistants into data‑mining machines. Usage patterns, location, and preferences were harvested to build detailed profiles for advertising, often without meaningful consent. Regulators responded with GDPR fines as large as 746 million euros, and roughly 40 percent of users reported feeling uneasy about who was listening. Third‑party skill stores were the final straw: malicious apps slipped through review, grabbing data, installing backdoors, and compromising the entire ecosystem.

The combination of these threats made the decision to go local feel like a relief, a clean break from a relationship that was more toxic than convenient. The same vulnerabilities plague cloud‑connected cameras, where unauthorized access to feeds has been documented across multiple device generations, and they illustrate how any internet‑linked device can become an entry point for attackers. Outsiders are not the only worry, either: by some estimates, insider threats account for around 60% of data breaches, which is one more reason to keep recordings off other people’s servers. For data that still has to travel, quantum‑ready encryption can further mitigate future risks. The result? A quieter home, a safer voice, and a digital life that finally respects the user’s boundaries.
